The goal of this test is to see how PostgreSQL behaves with heavy insert load of logs.

The PostgreSQL (15.8 (Debian 15.8-0+deb12u1)) is running within KVM VM with 2 cores and further restricted via cgroupv2 cpu.max parameter set to “25 100“, to give a quarter of core performance distributed over to given cores.

Input log-set total size is around 60GiB of random log data, and a Rust client performing batch inserts to Elasticsearch.

Findings

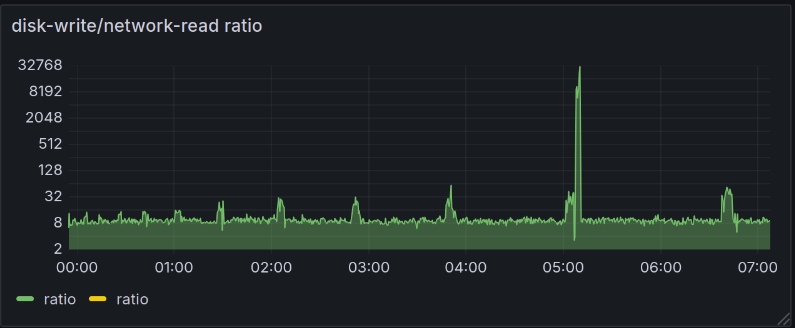

PostgreSQL insert performance was less than was expected. The insert started at rate of almost 2 MiB, and then gradually fell with time. I don’t have the rate at graph, but graph below, shows total NIC rate which shows that read rate is slowly reducing over time. The load was mostly limited by IO, write rate was quite high when compared to other databases. One interesting thing is that when we look at the Disk R/W Data, we can see that write rate was slowly getting slower, as read rate increased. This was most likely the result of indexes created on log table.

One main give-away would be that disk write rate / network read rate ratio is quote high. This is shown in second graph.

Log table was created as unlogged, as logged table was again, slower to the point it was pointless to test it.

I did not futher try to tweak DB performance because, we can see that disk write rate was already quite high.

I doubt that anything could be done to fix this since it’s an important part of transactional database.

Setup

Sender configuration

A single Rust client sending logs in sequence. The writes are not parallelized.

use std::cell::RefCell;

use std::fs::File;

use std::io::{BufRead, BufReader};

use std::ops::Sub;

use std::pin::pin;

use anyhow::{Result, anyhow};

use chrono::{DateTime, Utc};

use itertools::Itertools;

use tokio_postgres::NoTls;

use chrono::Local;

fn file_paths() -> Vec<String> {

vec![

"/log/app.log"

].into_iter().map(|e| e.to_string()).collect()

}

#[derive(Debug)]

struct LogRecord {

timestamp: DateTime<Utc>,

msg: String,

}

struct LogReader {

log_files: Vec<String>,

log_file_pos: usize,

reader: RefCell<Option<BufReader<File>>>,

now: DateTime<Local>,

bytes_read: usize

}

impl LogReader {

fn new(log_files: Vec<String>) -> LogReader {

Self { log_files, log_file_pos: 0, reader: RefCell::new(None), now: Local::now() , bytes_read: 0}

}

fn read_batch(&mut self, size: usize) -> Result<Vec<LogRecord>> {

let mut recs = vec![];

if self.reader.borrow().is_none() {

if self.log_file_pos >= self.log_files.len() {

return Ok(recs);

}

let path = &self.log_files[self.log_file_pos];

let reader = BufReader::with_capacity(1024 * 1024 * 16, File::open(path)?);

self.reader.borrow_mut().replace(reader);

self.log_file_pos += 1;

}

loop {

let mut line = String::new();

let len = self.reader.borrow_mut().as_mut().unwrap().read_line(&mut line)?;

if len == 0 {

break;

}

self.bytes_read += len + 1 /* - for newline */;

let mut iter = line.split(" ").into_iter();

let timestamp = iter.next().ok_or(anyhow!("Bad file"))?;

let message = iter.collect_vec().join(" ");

let time = chrono::NaiveDateTime::parse_from_str(timestamp, "%Y-%m-%dT%H:%M:%S.%3f")?;

let time = time.and_utc();

let time = time.with_timezone(&Utc);

recs.push(LogRecord {

timestamp: time,

msg: message,

});

let now = Local::now();

if now.sub(self.now).num_milliseconds() > 1000 {

let ms = now.sub(self.now).num_milliseconds();

println!("in {ms} written {} bytes, {} KiB, {} MiB read", self.bytes_read, self.bytes_read/1024, self.bytes_read/1024/1024);

self.bytes_read = 0;

self.now = now;

}

if recs.len() == size {

return Ok(recs);

}

}

self.reader.borrow_mut().take();

recs.append(&mut self.read_batch(size - recs.len())?);

Ok(recs)

}

}

#[tokio::main]

async fn main() {

let paths = file_paths();

let mut reader = LogReader::new(paths);

let (mut client, connection) =

tokio_postgres::connect("host=192.168.122.124 user=postgres password=postgres dbname=postgres", NoTls).await.unwrap();

tokio::spawn(async move {

connection.await.unwrap();

});

loop {

let z = reader.read_batch(2000).unwrap();

let trans = client.transaction().await.unwrap();

let sink = trans.copy_in("COPY logs (time, log) from STDIN BINARY ;").await.unwrap();

let writer = tokio_postgres::binary_copy::BinaryCopyInWriter::new(sink, &vec![tokio_postgres::types::Type::TIMESTAMPTZ, tokio_postgres::types::Type::TEXT]);

let mut writer = pin!(writer);

for r in z {

writer.as_mut().write(&[&r.timestamp, &r.msg]).await.unwrap();

}

writer.finish().await.unwrap();

trans.commit().await.unwrap();

}

}

PostgreSQL table DDL

create unlogged table logs

(

id serial4 not null primary key,

time timestamptz not null,

log text not null,

msg tsvector generated always as ( to_tsvector('english', log) ) STORED

);

create index if not exists logs_msg on logs using gin (msg);

create index if not exists logs_ts on logs using btree (time);KVM Configuration

Typical 2 core setup with 2 GiB of RAM, but with following agumentation.

Block device limits

<iotune>

<total_bytes_sec>125829120</total_bytes_sec>

<total_iops_sec>3000</total_iops_sec>

</iotune>Network device limits

<bandwidth>

<inbound average="12000" peak="12000" burst="12000"/>

<outbound average="12000" peak="12000"/>

</bandwidth>